It's better to have fewer, correctly oriented cameras than a lot of "accepted" but wrong cameras in your workspace. When you get "blobs", it's generally because some cameras are wrongly oriented.

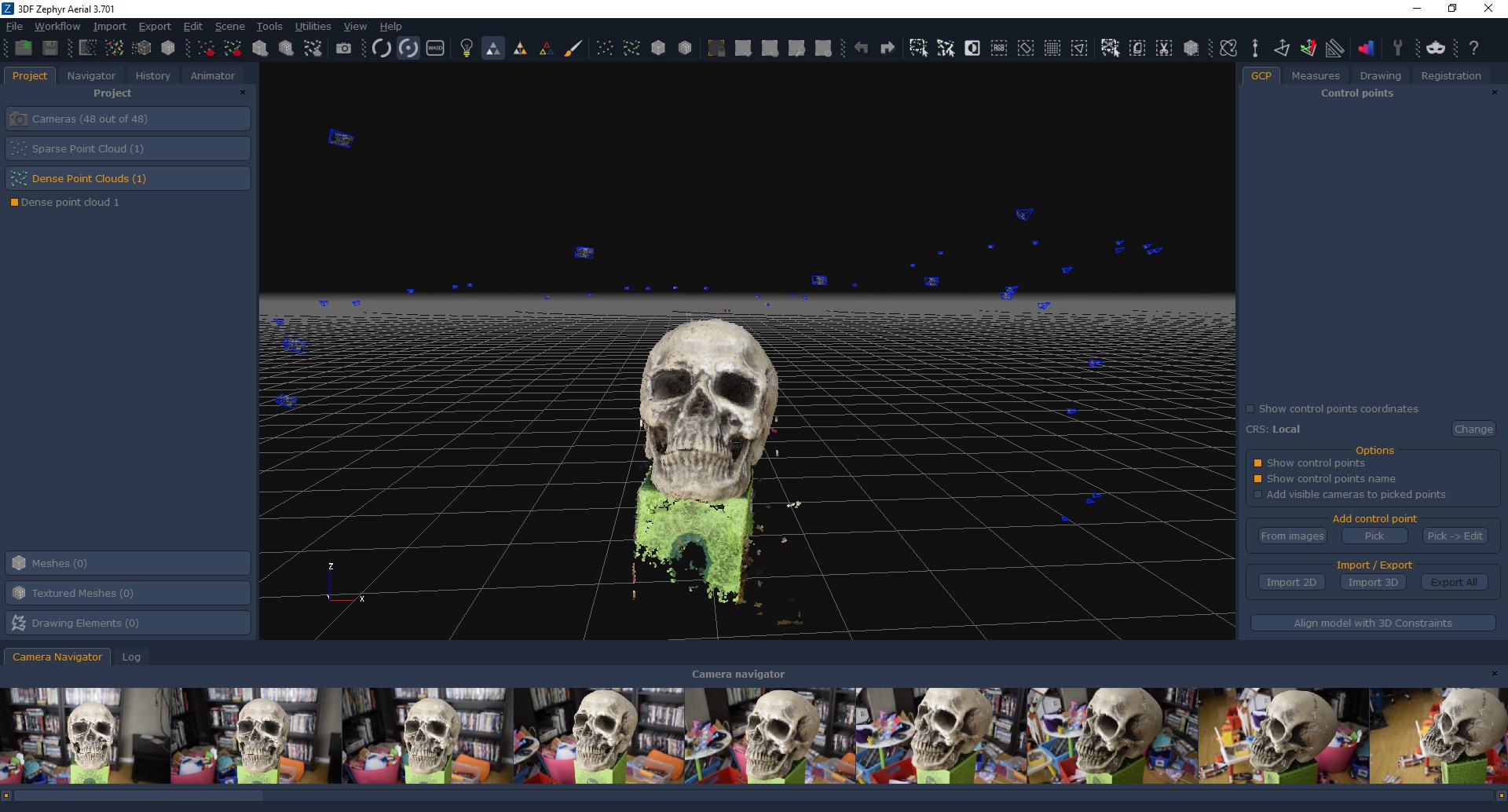

I used the beta, but I am sure you can get very similar results using 4.530. I understand that it's not possible to get more images for this specific dataset, but the correct approach would be to take more images, and ones better suited to photogrammetry. Unfortunately, only 32 images were oriented, and I doubt it's possible to get better results without resorting to control points. That's what I did with general/deep and then urban/deep.

Note that when cameras are clearly wrongly oriented, it's better to change the preset completely: try one that orients fewer images (but correctly) and then, if needed, try orienting the ones that were left out using a different preset. In this case, I was unable to orient the full dataset in one shot.

I strongly advise against any type of preprocessing. If anything, use the processed images for texturing only, and only if they look better (workspace / change images, after you have generated a mesh you are happy with). I would avoid upscaling, as AI software will generally smooth the image, removing keypoints in the process.
